Package tomotopy

Python package tomotopy provides types and functions for various topic models, including LDA, DMR, HDP, MG-LDA, PA and HPA. It is written in C++ for speed and provides a Python extension.

What is tomotopy?

tomotopy is a Python extension of tomoto (Topic Modeling Tool), a Gibbs-sampling-based topic model library written in C++. It uses the vector (SIMD) instructions of modern CPUs to maximize speed.

The current version of tomoto supports several major topic models, including:

- Latent Dirichlet Allocation (LDAModel)
- Labeled LDA (LLDAModel)
- Partially Labeled LDA (PLDAModel)
- Supervised LDA (SLDAModel)
- Dirichlet Multinomial Regression (DMRModel)
- Generalized Dirichlet Multinomial Regression (GDMRModel)
- Hierarchical Dirichlet Process (HDPModel)
- Hierarchical LDA (HLDAModel)
- Multi Grain LDA (MGLDAModel)
- Pachinko Allocation (PAModel)
- Hierarchical PA (HPAModel)
- Correlated Topic Model (CTModel)
- Dynamic Topic Model (DTModel)
- Pseudo-document based Topic Model (PTModel)

Getting Started

You can easily install tomotopy using pip (https://pypi.org/project/tomotopy/). ::

    $ pip install --upgrade pip
    $ pip install tomotopy

The supported OS and Python versions are:

- Linux (x86-64) with Python >= 3.6

- macOS >= 10.13 with Python >= 3.6

- Windows 7 or later (x86, x86-64) with Python >= 3.6

- Other OS with Python >= 3.6: compilation from source code is required (with a C++14-compatible compiler)

After installation, you can start using tomotopy simply by importing it. ::

    import tomotopy as tp
    print(tp.isa)  # prints 'avx2', 'avx', 'sse2' or 'none'

Currently, tomotopy can exploit the AVX2, AVX or SSE2 SIMD instruction sets to maximize performance. When the package is imported, it checks the available instruction sets and selects the best option.

If tp.isa returns 'none', training iterations may take a long time. However, most modern Intel and AMD CPUs provide a SIMD instruction set, so SIMD acceleration can give a large speedup.

Here is sample code for simple LDA training on the texts in the 'sample.txt' file. ::

    import tomotopy as tp

    mdl = tp.LDAModel(k=20)
    with open('sample.txt') as f:
        for line in f:
            mdl.add_doc(line.strip().split())

    for i in range(0, 100, 10):
        mdl.train(10)
        print('Iteration: {}\tLog-likelihood: {}'.format(i, mdl.ll_per_word))

    for k in range(mdl.k):
        print('Top 10 words of topic #{}'.format(k))
        print(mdl.get_topic_words(k, top_n=10))

    mdl.summary()

Performance Of Tomotopy

tomotopy uses Collapsed Gibbs Sampling (CGS) to infer the distribution of topics and the distribution of words.

Generally, CGS converges more slowly than the Variational Bayes (VB) method used by gensim's LdaModel, but each CGS iteration can be computed much faster.

In addition, tomotopy can take advantage of multicore CPUs with a SIMD instruction set, which makes iterations even faster.
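The sketch below shows why a single CGS iteration is cheap. It is a minimal pure-Python collapsed Gibbs sampler for plain LDA. It is not tomotopy's C++ implementation, which adds SIMD, multithreading and hyperparameter handling that are not shown here. The function name cgs_lda, the integer word ids and the default alpha and eta values are illustrative assumptions. Each iteration makes one pass over all tokens. For each token, the sampler removes the token's topic from the count tables, resamples the topic from its full conditional, and adds the token back.

```python
import random
from typing import List, Tuple


def cgs_lda(docs: List[List[int]], n_vocab: int, k: int,
            alpha: float = 0.1, eta: float = 0.01, iters: int = 50,
            seed: int = 0) -> Tuple[List[List[int]], List[List[int]], List[List[int]]]:
    """Toy collapsed Gibbs sampler for LDA; words are integer ids in [0, n_vocab)."""
    rng = random.Random(seed)
    ndk = [[0] * k for _ in docs]            # tokens of each topic in each document
    nkw = [[0] * n_vocab for _ in range(k)]  # occurrences of each word in each topic
    nk = [0] * k                             # total tokens assigned to each topic
    z: List[List[int]] = []                  # current topic of every token

    # random initial topic assignment
    for d, doc in enumerate(docs):
        z.append([])
        for w in doc:
            t = rng.randrange(k)
            z[d].append(t)
            ndk[d][t] += 1
            nkw[t][w] += 1
            nk[t] += 1

    for _ in range(iters):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # remove the token's current assignment from the counts
                t = z[d][i]
                ndk[d][t] -= 1
                nkw[t][w] -= 1
                nk[t] -= 1
                # unnormalized full conditional p(z_i = j | all other assignments)
                weights = [(ndk[d][j] + alpha) * (nkw[j][w] + eta) / (nk[j] + n_vocab * eta)
                           for j in range(k)]
                t = rng.choices(range(k), weights=weights)[0]
                # add the token back with its newly sampled topic
                z[d][i] = t
                ndk[d][t] += 1
                nkw[t][w] += 1
                nk[t] += 1
    return z, ndk, nkw


z, ndk, nkw = cgs_lda([[0, 1, 2], [0, 1], [3, 4, 3], [4, 3]], n_vocab=5, k=2, iters=20, seed=1)
```

Each update touches only a few counters, so one sweep is cheap. The trade-off is that many sweeps are needed, which matches the comparison with VB above.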

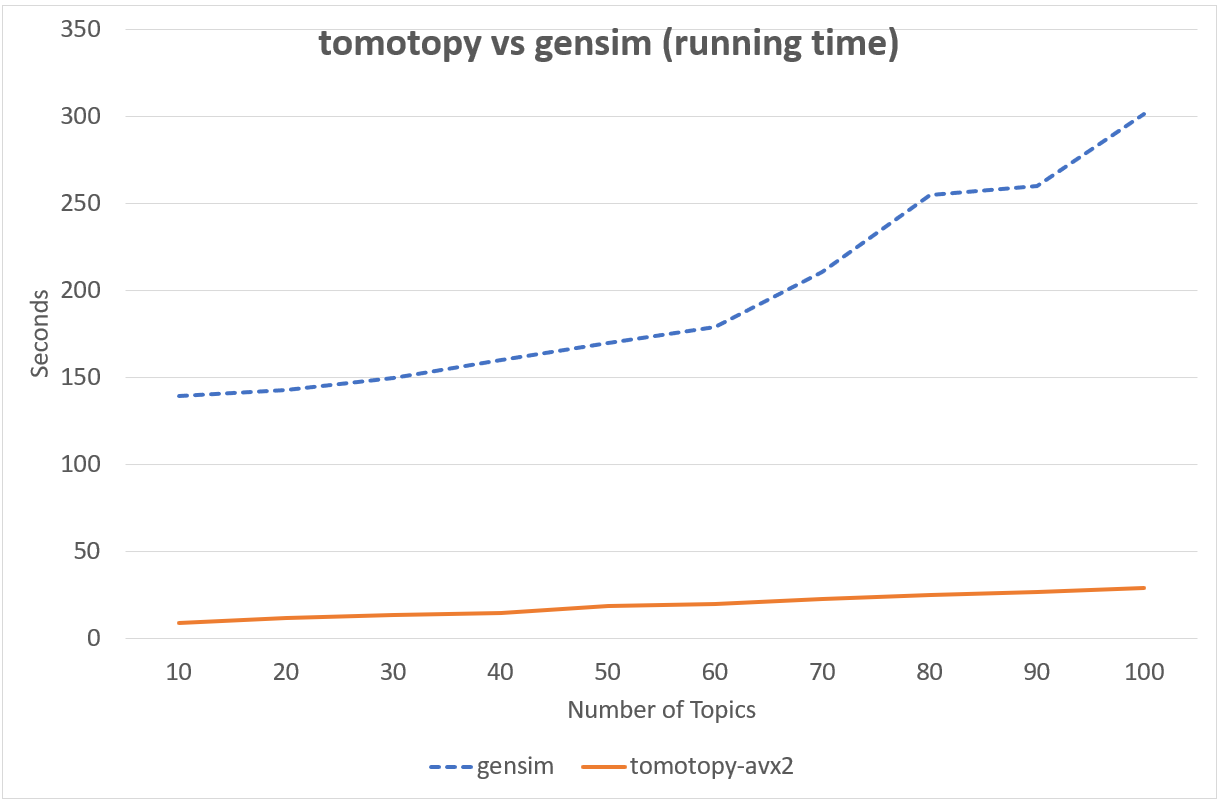

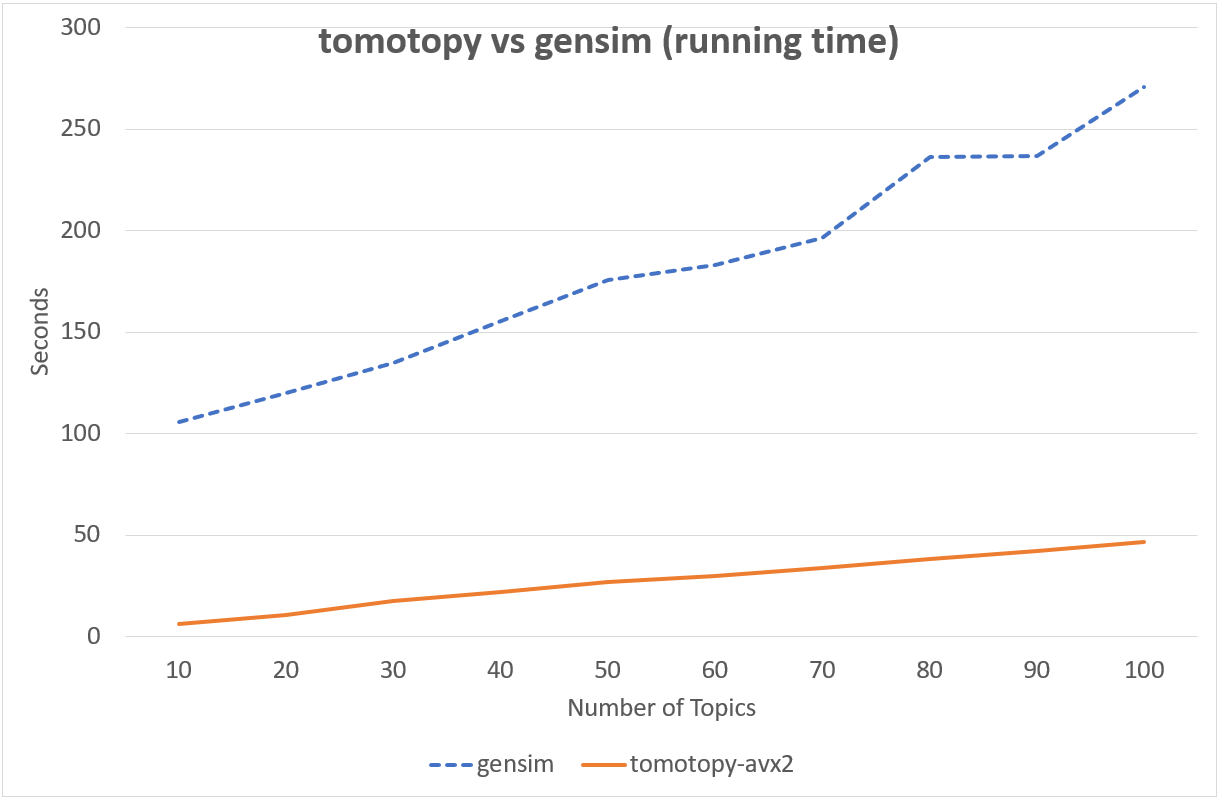

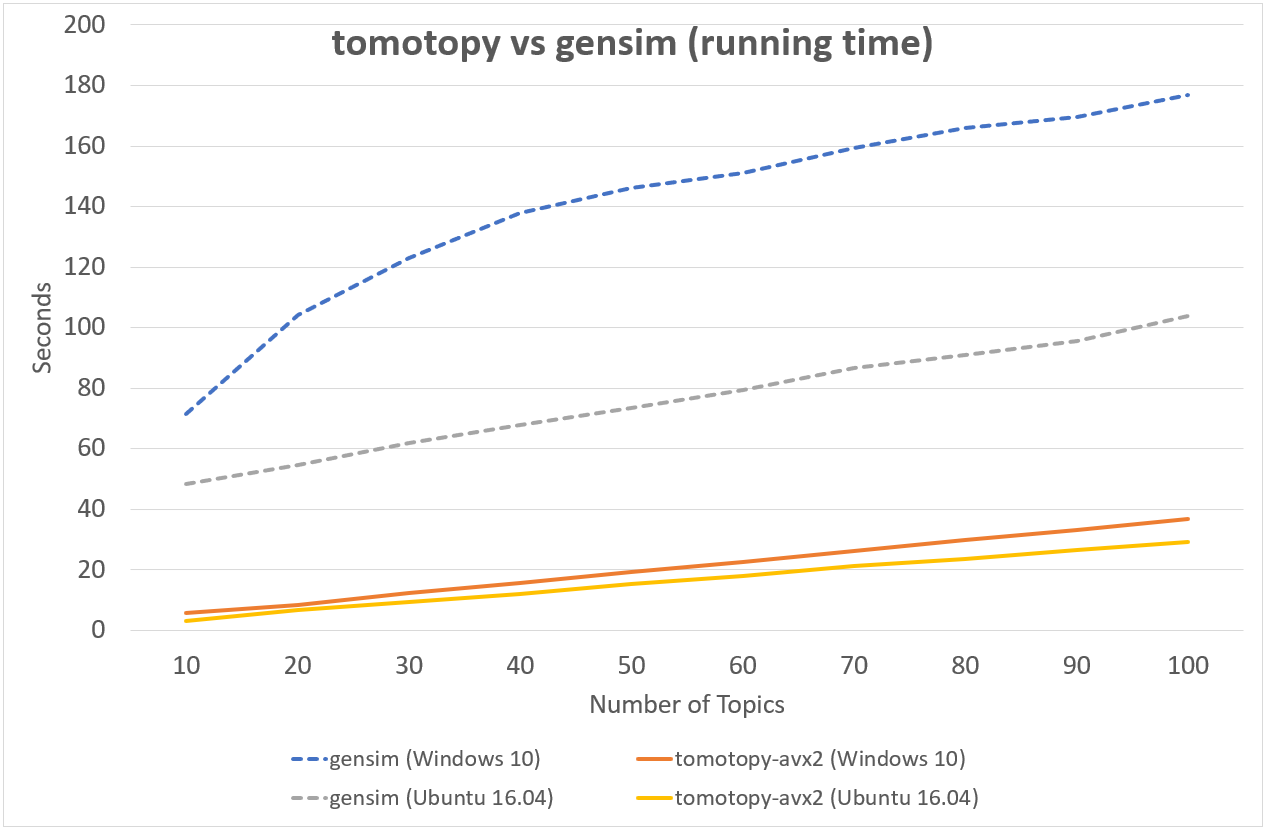

Following chart shows the comparison of LDA model's running time between tomotopy and gensim.

The input data consists of 1000 random documents from English Wikipedia with 1,506,966 words (about 10.1 MB).

tomotopy trains 200 iterations and gensim trains 10 iterations.

↑ Performance in Intel i5-6600, x86-64 (4 cores)

↑ Performance in Intel Xeon E5-2620 v4, x86-64 (8 cores, 16 threads)

↑ Performance in AMD Ryzen7 3700X, x86-64 (8 cores, 16 threads)

Although tomotopy iterated 20 times more, the overall running time was 5~10 times faster than gensim. And it yields a stable result.

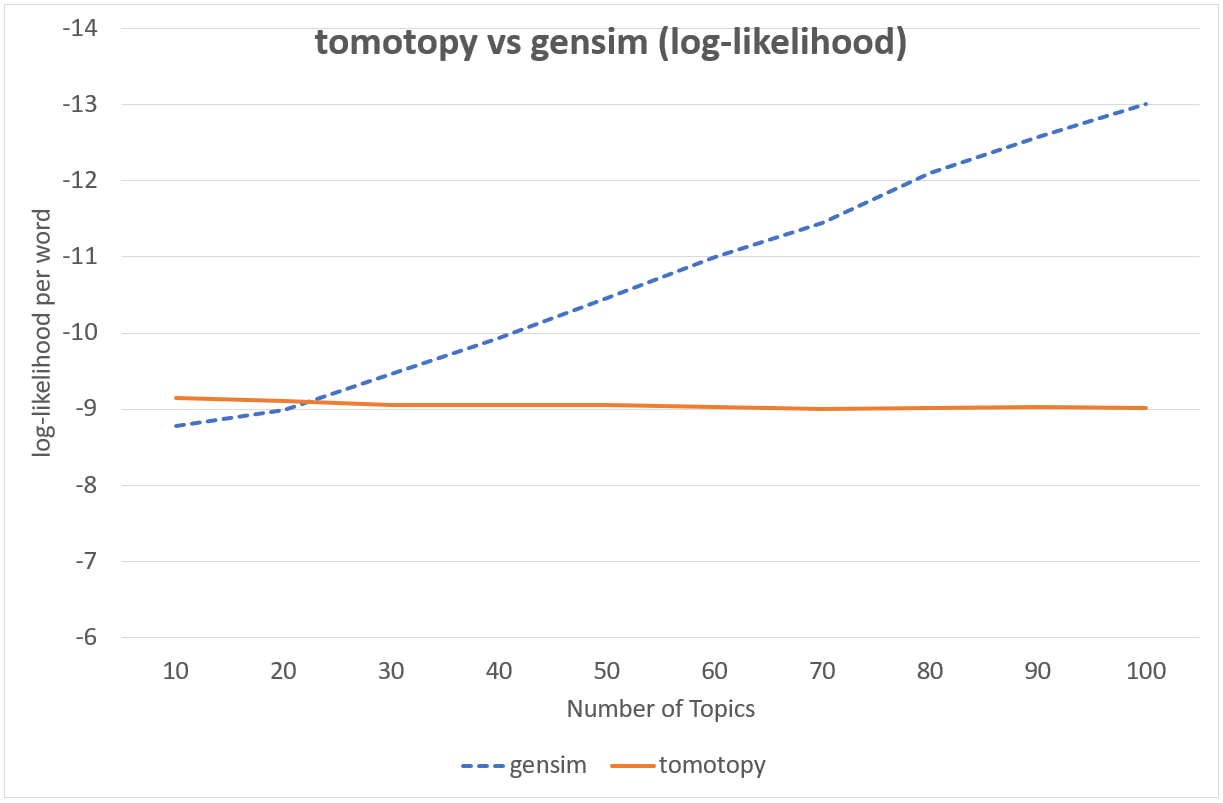

It is difficult to compare CGS and VB directly because they are totaly different techniques. But from a practical point of view, we can compare the speed and the result between them. The following chart shows the log-likelihood per word of two models' result.

Top words of topics generated by tomotopy

| Topic | Top words |
|---|---|
| #1 | use, acid, cell, form, also, effect |
| #2 | use, number, one, set, comput, function |
| #3 | state, use, may, court, law, person |
| #4 | state, american, nation, parti, new, elect |
| #5 | film, music, play, song, anim, album |
| #6 | art, work, design, de, build, artist |
| #7 | american, player, english, politician, footbal, author |
| #8 | appl, use, comput, system, softwar, compani |
| #9 | day, unit, de, state, german, dutch |
| #10 | team, game, first, club, leagu, play |
| #11 | church, roman, god, greek, centuri, bc |
| #12 | atom, use, star, electron, metal, element |
| #13 | alexand, king, ii, emperor, son, iii |
| #14 | languag, arab, use, word, english, form |
| #15 | speci, island, plant, famili, order, use |
| #16 | work, univers, world, book, human, theori |
| #17 | citi, area, region, popul, south, world |
| #18 | forc, war, armi, militari, jew, countri |
| #19 | year, first, would, later, time, death |
| #20 | apollo, use, aircraft, flight, mission, first |

Top words of topics generated by gensim

| Topic | Top words |
|---|---|
| #1 | use, acid, may, also, azerbaijan, cell |
| #2 | use, system, comput, one, also, time |
| #3 | state, citi, day, nation, year, area |
| #4 | state, lincoln, american, war, union, bell |
| #5 | anim, game, anal, atari, area, sex |
| #6 | art, use, work, also, includ, first |
| #7 | american, player, english, politician, footbal, author |
| #8 | new, american, team, season, leagu, year |
| #9 | appl, ii, martin, aston, magnitud, star |
| #10 | bc, assyrian, use, speer, also, abort |
| #11 | use, arsen, also, audi, one, first |
| #12 | algebra, use, set, ture, number, tank |
| #13 | appl, state, use, also, includ, product |
| #14 | use, languag, word, arab, also, english |
| #15 | god, work, one, also, greek, name |
| #16 | first, one, also, time, work, film |
| #17 | church, alexand, arab, also, anglican, use |
| #18 | british, american, new, war, armi, alfr |
| #19 | airlin, vote, candid, approv, footbal, air |
| #20 | apollo, mission, lunar, first, crew, land |

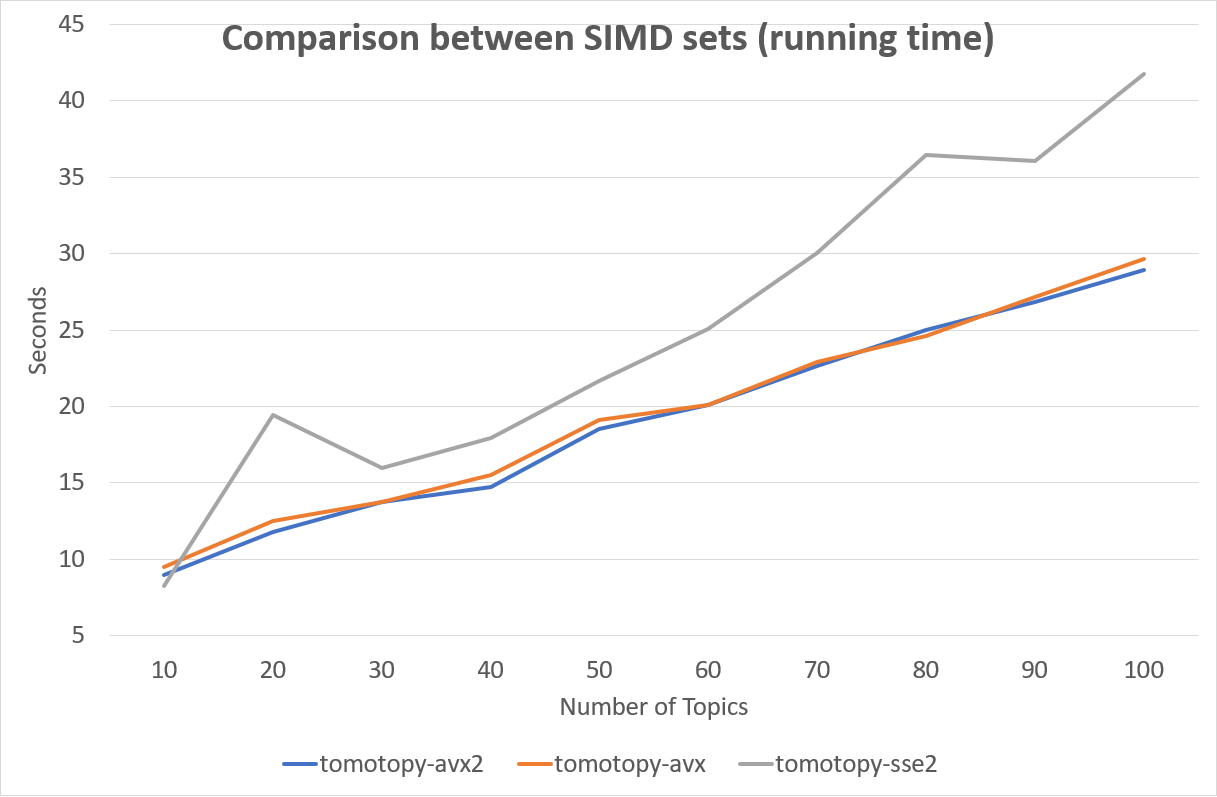

The SIMD instruction set has a great effect on performance. Following is a comparison between SIMD instruction sets.

Fortunately, most of recent x86-64 CPUs provide AVX2 instruction set, so we can enjoy the performance of AVX2.

Vocabulary Controlling Using CF And DF

CF (collection frequency) and DF (document frequency) are concepts used in information retrieval. CF is the total number of times a word appears in the corpus. DF is the number of documents in the corpus in which the word appears.

tomotopy provides these two measures through the parameters min_cf and min_df, which trim low-frequency words when building the corpus.

For example, suppose we have five documents, #0 to #4, composed of the following words: ::

    #0 : a, b, c, d, e, c
    #1 : a, b, e, f
    #2 : c, d, c
    #3 : a, e, f, g
    #4 : a, b, g

The CF of a and the CF of c are both 4, because each appears 4 times in the entire corpus. However, the DF of a is 4 and the DF of c is 2, because a appears in #0, #1, #3 and #4, while c appears only in #0 and #2.

So if we trim low-frequency words using min_cf=3, the result is as follows (d, f and g are removed): ::

    #0 : a, b, c, e, c
    #1 : a, b, e
    #2 : c, c
    #3 : a, e
    #4 : a, b

However, with min_df=3 the result is as follows (c, d, f and g are removed): ::

    #0 : a, b, e
    #1 : a, b, e
    #2 : (empty doc)
    #3 : a, e
    #4 : a, b

As we can see, min_df is a stronger criterion than min_cf, because a word's DF can never exceed its CF.

In topic modeling, words that appear repeatedly in only one document do not contribute to estimating the topic-word distribution. Removing words with a low DF is therefore a good way to reduce the model size while preserving the results of the final model.

In short, prefer min_df over min_cf.
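The trimming rules above can be checked on the five example documents with a small stdlib-only sketch. cf_df and trim are hypothetical helpers written for illustration, not tomotopy functions. The sketch assumes a word is kept when its CF is at least min_cf and its DF is at least min_df, which reproduces both results shown above.

```python
from collections import Counter
from typing import List, Tuple


def cf_df(docs: List[List[str]]) -> Tuple[Counter, Counter]:
    """Return (collection frequency, document frequency) counters for a tokenized corpus."""
    cf = Counter(w for doc in docs for w in doc)       # every occurrence counts
    df = Counter(w for doc in docs for w in set(doc))  # at most once per document
    return cf, df


def trim(docs: List[List[str]], min_cf: int = 0, min_df: int = 0) -> List[List[str]]:
    """Drop words whose CF is below min_cf or whose DF is below min_df."""
    cf, df = cf_df(docs)
    keep = {w for w in cf if cf[w] >= min_cf and df[w] >= min_df}
    return [[w for w in doc if w in keep] for doc in docs]


# the five example documents #0 ~ #4
docs = [list('abcdec'), list('abef'), list('cdc'), list('aefg'), list('abg')]
print(trim(docs, min_cf=3))
print(trim(docs, min_df=3))
```

In tomotopy itself, you pass the thresholds to the model constructor instead, for example tp.LDAModel(k=20, min_df=3).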

Model Save And Load

tomotopy provides save and load methods for each topic model class, so you can save the model to a file whenever you want and reload it from the file later.

::

    import tomotopy as tp

    mdl = tp.HDPModel()
    with open('sample.txt') as f:
        for line in f:
            mdl.add_doc(line.strip().split())

    for i in range(0, 100, 10):
        mdl.train(10)
        print('Iteration: {}\tLog-likelihood: {}'.format(i, mdl.ll_per_word))

    # save into file
    mdl.save('sample_hdp_model.bin')

    # load from file
    mdl = tp.HDPModel.load('sample_hdp_model.bin')
    for k in range(mdl.k):
        if not mdl.is_live_topic(k):
            continue
        print('Top 10 words of topic #{}'.format(k))
        print(mdl.get_topic_words(k, top_n=10))

    # the saved model is an HDP model,
    # so loading it with LDAModel raises an exception
    try:
        mdl = tp.LDAModel.load('sample_hdp_model.bin')
    except Exception as e:
        print('Cannot load an HDP model as LDAModel:', e)

When you load a model from a file, the model type stored in the file must match the class whose load method you call.

See more at the LDAModel.save() and LDAModel.load() methods.

Documents In The Model And Out Of The Model

We can use a topic model for two major purposes. The basic one is to discover topics in a set of documents by training a model. The more advanced one is to infer topic distributions for unseen documents using the trained model.

We call a document used for the former purpose (model training) a document in the model. We call a document used for the latter purpose (unseen during training) a document out of the model.

In tomotopy, these two kinds of documents are created differently.

A document in the model is created by the LDAModel.add_doc() method. add_doc can be called only before LDAModel.train() starts. In other words, after train is called, add_doc cannot add a document into the model, because the set of documents used for training has become fixed.

To acquire the instance of the created document, use LDAModel.docs like this:

::

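    import tomotopy as tp

    mdl = tp.LDAModel(k=20)
    words = ['example', 'tokens']  # placeholder: a tokenized document (list of str)
    idx = mdl.add_doc(words)
    if idx < 0:
        raise RuntimeError("Failed to add doc")
    doc_inst = mdl.docs[idx]
    # doc_inst is an instance of the added document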

A document out of the model is created by the LDAModel.make_doc() method. make_doc can be called only after train starts. If you call make_doc before the set of documents used for training has become fixed, you may get wrong results.

Since make_doc returns the instance directly, you can use its return value for other manipulations.

::

    mdl = tp.LDAModel(k=20)
    # add_doc ...
    mdl.train(100)
    # unseen_doc: a tokenized document (list of str) that was not used for training
    doc_inst = mdl.make_doc(unseen_doc)  # doc_inst is an instance of the unseen document

Inference For Unseen Documents

If a new document is created by LDAModel.make_doc(), the model can infer its topic distribution. Inference for an unseen document should be performed using the LDAModel.infer() method.

::

    mdl = tp.LDAModel(k=20)
    # add_doc ...
    mdl.train(100)
    doc_inst = mdl.make_doc(unseen_doc)  # unseen_doc: a tokenized document (list of str)
    topic_dist, ll = mdl.infer(doc_inst)
    print("Topic Distribution for Unseen Docs: ", topic_dist)
    print("Log-likelihood of inference: ", ll)

The infer method accepts either a single Document instance or a list of Document instances.

See more at LDAModel.infer().
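infer() returns a per-topic distribution, and picking the most probable topics from it takes only a sort. top_topics below is a hypothetical helper, and the distribution passed to it is made-up data standing in for topic_dist. For documents, tomotopy's own Document.get_topics() (mentioned in the History) appears to cover a similar need.

```python
from typing import List, Sequence, Tuple


def top_topics(topic_dist: Sequence[float], top_n: int = 3) -> List[Tuple[int, float]]:
    """Return the top_n (topic_id, probability) pairs, most probable first."""
    # enumerate keeps each topic id paired with its probability before sorting
    ranked = sorted(enumerate(topic_dist), key=lambda pair: pair[1], reverse=True)
    return ranked[:top_n]


# a made-up distribution standing in for the topic_dist returned by infer()
best = top_topics([0.05, 0.6, 0.05, 0.3], top_n=2)
print(best)
```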

Corpus And Transform

Every topic model in tomotopy has its own internal document type. A document suited to each model can be created and added through that model's add_doc method.

However, adding the same list of documents to different models is quite inconvenient, because add_doc has to be called on each model for the same documents.

Thus, tomotopy provides the Corpus class, which holds a list of documents. A Corpus can be inserted into any model by passing it as the corpus argument to the model's __init__ or to its add_corpus method. Inserting a Corpus has the same effect as inserting the documents it holds.

Some topic models require different data for their documents. For example, DMRModel requires the argument metadata of type str, but PLDAModel requires the argument labels of type List[str].

Since a Corpus holds an independent set of documents rather than being tied to a specific topic model, the data types required by a topic model may not match when a corpus is added to that model. In this case, miscellaneous data can be transformed to fit the target topic model using the transform argument.

See more details in the following code:

::

    from tomotopy import DMRModel
    from tomotopy.utils import Corpus

    corpus = Corpus()
    corpus.add_doc("a b c d e".split(), a_data=1)
    corpus.add_doc("e f g h i".split(), a_data=2)
    corpus.add_doc("i j k l m".split(), a_data=3)

    model = DMRModel(k=10)
    model.add_corpus(corpus)
    # You lose the a_data field in corpus,
    # and the metadata that DMRModel requires is filled with the default value, an empty str.
    assert model.docs[0].metadata == ''
    assert model.docs[1].metadata == ''
    assert model.docs[2].metadata == ''

    def transform_a_data_to_metadata(misc: dict):
        # this function transforms a_data into metadata
        return {'metadata': str(misc['a_data'])}

    model = DMRModel(k=10)
    model.add_corpus(corpus, transform=transform_a_data_to_metadata)
    # Now the docs in model have non-default metadata, generated from the a_data field.
    assert model.docs[0].metadata == '1'
    assert model.docs[1].metadata == '2'
    assert model.docs[2].metadata == '3'
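The transform above assumes every document carries a_data. The sketch below is a slightly more defensive variant: it builds the transform from a field name and falls back to a default when the field is missing. make_metadata_transform is a hypothetical helper, not part of tomotopy. It relies only on what the example above appears to show: transform receives a dict of the document's miscellaneous fields and returns a dict of arguments for the target model.

```python
from typing import Any, Callable, Dict


def make_metadata_transform(field: str, default: str = '') -> Callable[[Dict[str, Any]], Dict[str, str]]:
    """Build a transform that copies misc[field] into DMRModel's 'metadata' (hypothetical helper)."""
    def transform(misc: Dict[str, Any]) -> Dict[str, str]:
        value = misc.get(field)
        # fall back to the default when the document lacks the field
        return {'metadata': default if value is None else str(value)}
    return transform


to_metadata = make_metadata_transform('a_data')
print(to_metadata({'a_data': 1}))
```

With the corpus from the example, this would be used as model.add_corpus(corpus, transform=make_metadata_transform('a_data')).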

Parallel Sampling Algorithms

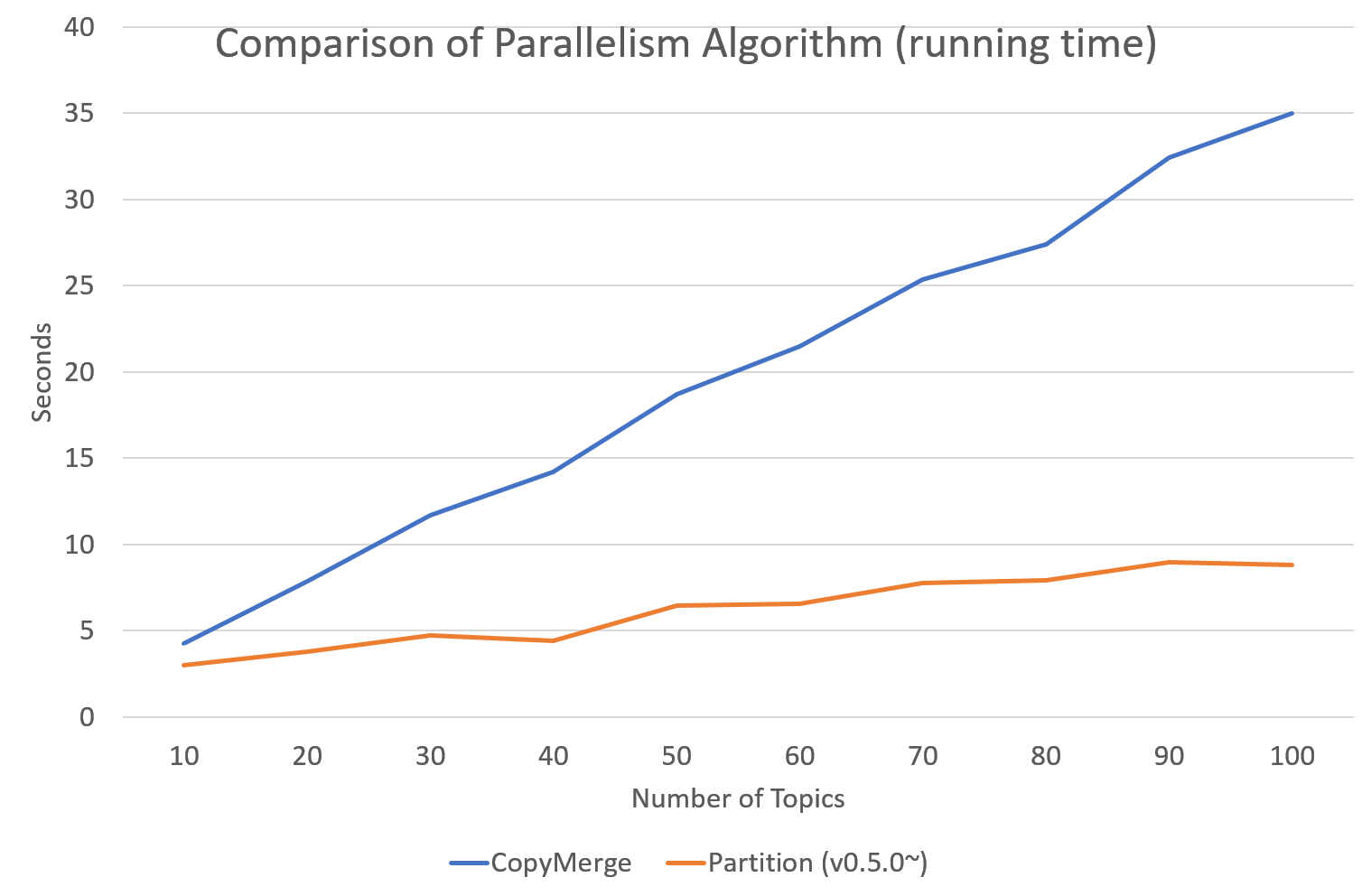

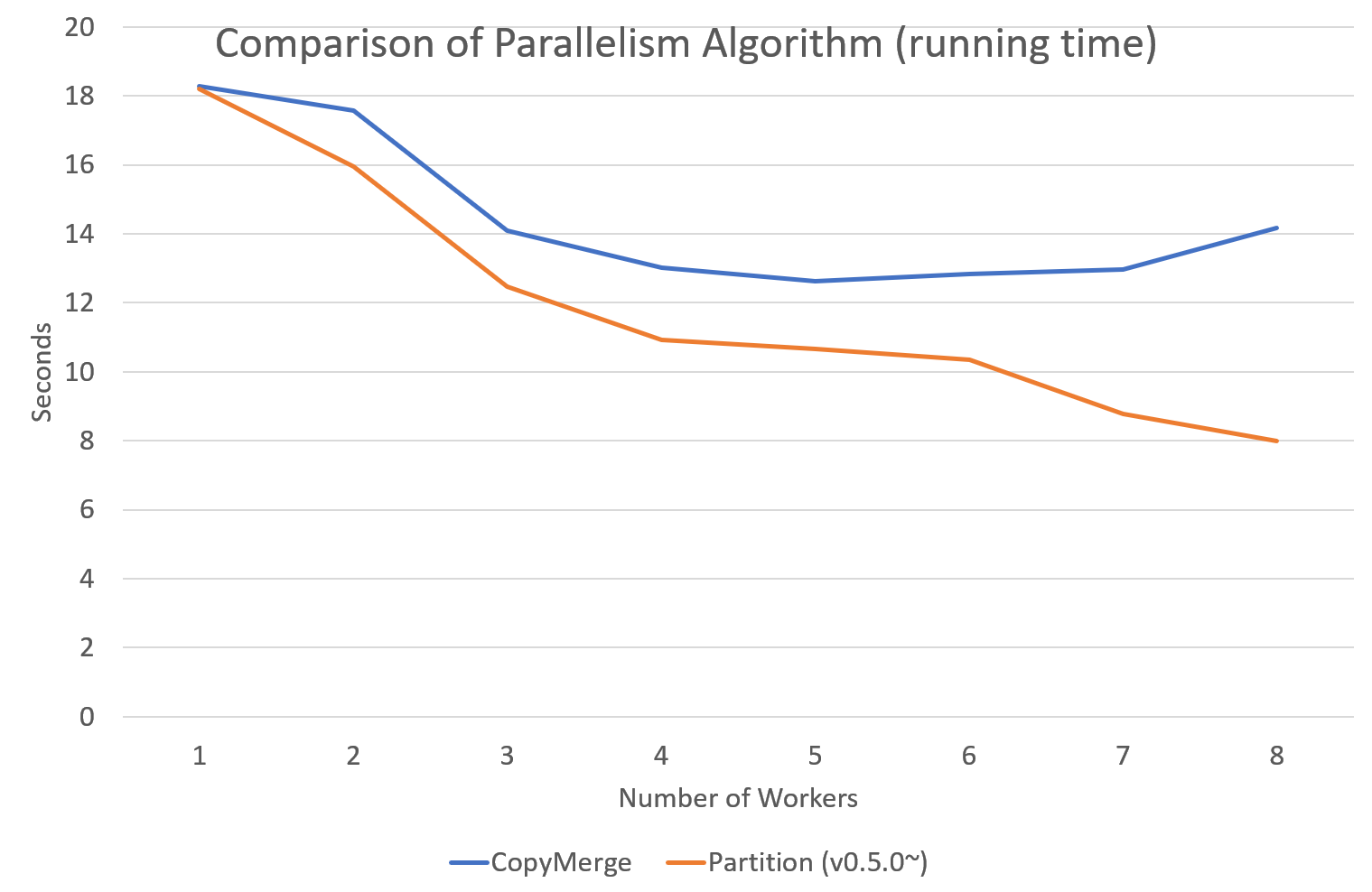

Since version 0.5.0, tomotopy allows you to choose a parallelism algorithm.

The only algorithm provided in versions prior to 0.5.0 is COPY_MERGE, which is available for all topic models.

The new algorithm PARTITION, available since 0.5.0, generally makes training faster and more memory-efficient, but it is not available for all topic models.

The following chart shows the speed difference between the two algorithms based on the number of topics and the number of workers.

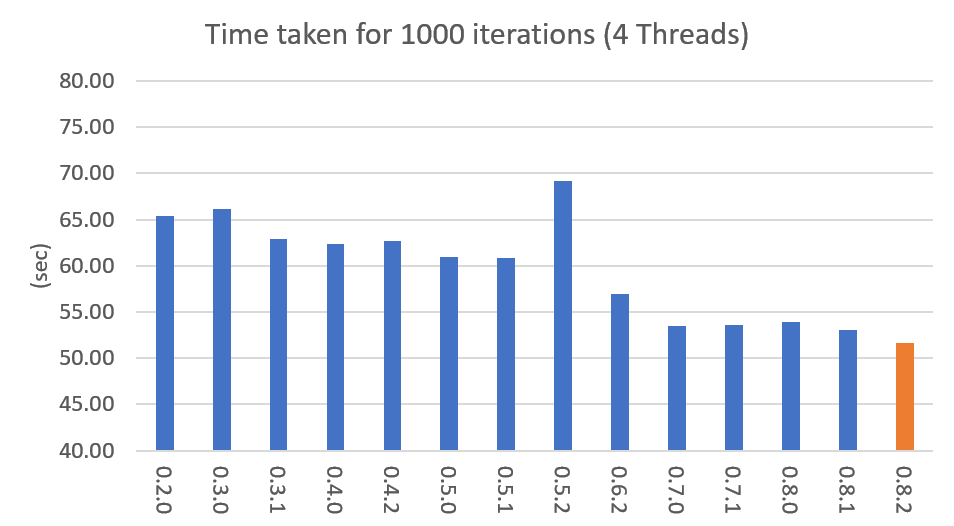

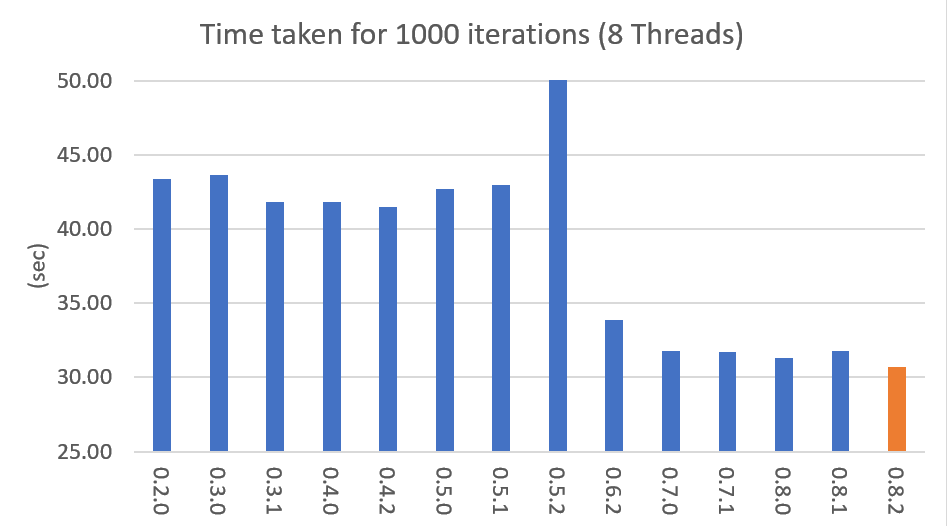

Performance By Version

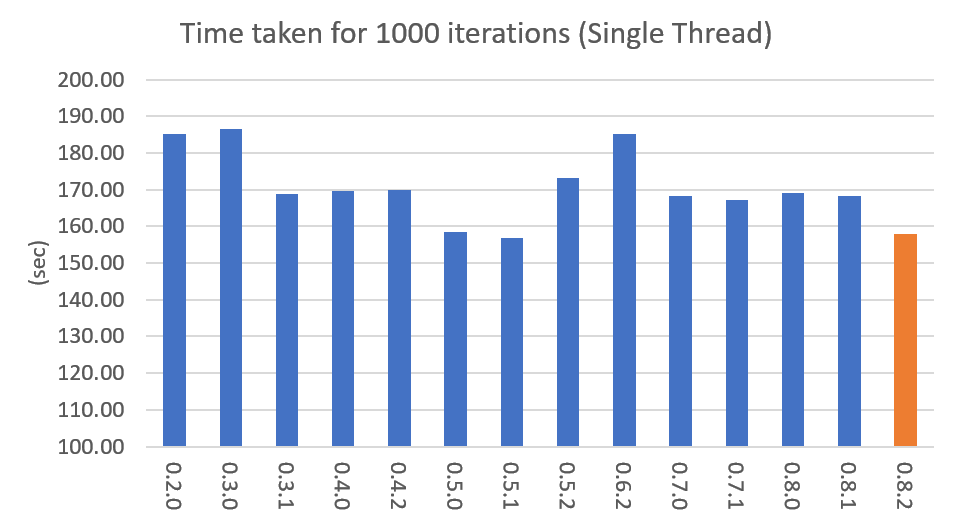

Performance changes by version are shown in the following graph. The time it takes to run the LDA model train with 1000 iteration was measured. (Docs: 11314, Vocab: 60382, Words: 2364724, Intel Xeon Gold 5120 @2.2GHz)

Pinning Topics Using Word Priors

Since version 0.6.0, a new method LDAModel.set_word_prior() has been available. It allows you to control the word prior for each topic.

For example, the following code sets the weight of the word 'church' to 1.0 in topic 0 and to 0.1 in all other topics. This means that, a priori, 'church' is 10 times more likely to be assigned to topic 0 than to any other topic. As a result, most occurrences of 'church' are assigned to topic 0, so topic 0 comes to contain many words related to 'church'.

This lets you place certain topics at a specific topic number.

::

    import tomotopy as tp

    mdl = tp.LDAModel(k=20)
    # add documents into mdl

    # setting word prior
    mdl.set_word_prior('church', [1.0 if k == 0 else 0.1 for k in range(20)])

See word_prior_example in example.py for more details.
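When several words need pinning, you can build all the prior lists in one place. pinned_priors below is a hypothetical helper, not a tomotopy API. For each seed word it produces the same kind of list as the example above: a high weight for the target topic and a low weight elsewhere. Only set_word_prior itself comes from tomotopy.

```python
from typing import Dict, List


def pinned_priors(seed_words: Dict[str, int], k: int,
                  high: float = 1.0, low: float = 0.1) -> Dict[str, List[float]]:
    """Map each seed word to a length-k prior list favoring its target topic (hypothetical helper)."""
    priors = {}
    for word, topic in seed_words.items():
        if not 0 <= topic < k:
            raise ValueError(f'topic {topic} for {word!r} is out of range [0, {k})')
        # high weight on the target topic, low weight on every other topic
        priors[word] = [high if t == topic else low for t in range(k)]
    return priors


priors = pinned_priors({'church': 0, 'football': 1}, k=20)
```

Each pair can then be applied the same way as the single call above, with mdl.set_word_prior(word, prior) for every word, prior in priors.items().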

Examples

You can find example Python code for tomotopy at https://github.com/bab2min/tomotopy/blob/main/examples/ .

You can also get the data file used in the example code at https://drive.google.com/file/d/18OpNijd4iwPyYZ2O7pQoPyeTAKEXa71J/view .

License

tomotopy is licensed under the terms of the MIT License, meaning you can use it for any reasonable purpose and remain in complete ownership of all the documentation you produce.

History

0.14.0 (2026-02-21)

- New features
  - Now the AVX512 instruction set is supported on the x86-64 architecture. If your CPU supports AVX512, you can enjoy much faster performance.
  - The AVX-only mode is removed.
  - Now tomotopy supports the Python 3 Stable ABI, so you can use the same tomotopy package for Python 3.9 and later.
  - Now tomotopy supports numpy 2.0 and later.

0.13.0 (2024-08-05)

- New features
  - Major features of the Topic Model Viewer tomotopy.viewer.open_viewer() are ready now.
  - LDAModel.get_hash() is added. You can get a 128-bit hash value of the model.
  - Added an argument ngram_list to SimpleTokenizer.
- Bug fixes
  - Fixed an inconsistent spans bug after Corpus.concat_ngrams is called.
  - Optimized the bottleneck of LDAModel.load() and LDAModel.save(), improving their speed more than 10 times.

0.12.7 (2023-12-19)

- New features
  - Added the Topic Model Viewer tomotopy.viewer.open_viewer().
  - Optimized the performance of Corpus.process().
- Bug fixes
  - Document.span now returns the ranges in character units, not in byte units.

0.12.6 (2023-12-11)

- New features
  - Added some convenience features to LDAModel.train() and LDAModel.set_word_prior().
  - LDAModel.train now has the new arguments callback, callback_interval and show_progress to monitor the training progress.
  - LDAModel.set_word_prior now can accept the Dict[int, float] type as its argument prior.

0.12.5 (2023-08-03)

- New features
  - Added support for the Linux ARM64 architecture.

0.12.4 (2023-01-22)

- New features
  - Added support for the macOS ARM64 architecture.
- Bug fixes
  - Fixed an issue where Document.get_sub_topic_dist() raises a bad argument exception.
  - Fixed an issue where raising exceptions sometimes causes crashes.

0.12.3 (2022-07-19)

- New features
  - Now, inserting an empty document using LDAModel.add_doc() just ignores it instead of raising an exception. If the newly added argument ignore_empty_words is set to False, an exception is raised as before.
  - The HDPModel.purge_dead_topics() method is added to remove non-live topics from the model.
- Bug fixes
  - Fixed an issue that prevents setting user-defined values for nuSq in SLDAModel (by @jucendrero).
  - Fixed an issue where Coherence did not work for DTModel.
  - Fixed an issue that often crashed when calling make_dic() before calling train().
  - Resolved the problem that the results of DMRModel and GDMRModel are different even when the seed is fixed.
  - The parameter optimization process of DMRModel and GDMRModel has been improved.
  - Fixed an issue that sometimes crashed when calling LDAModel.copy().

0.12.2 (2021-09-06)

- An issue where calling convert_to_lda of HDPModel with min_cf > 0, min_df > 0 or rm_top > 0 causes a crash has been fixed.
- A new argument from_pseudo_doc is added to Document.get_topics() and Document.get_topic_dist(). This argument is only valid for documents of PTModel; it controls the source used for computing the topic distribution.
- The default value for the argument p of PTModel has been changed. The new default value is k * 10.
- Using documents generated by make_doc without calling infer doesn't cause a crash anymore, but just prints warning messages.
- An issue where the internal C++ code isn't compiled in a clang C++17 environment has been fixed.

0.12.1 (2021-06-20)

- An issue where LDAModel.set_word_prior() causes a crash has been fixed.
- Now LDAModel.perplexity and LDAModel.ll_per_word return the accurate value when TermWeight is not ONE.
- LDAModel.used_vocab_weighted_freq was added, which returns term-weighted frequencies of words.
- Now LDAModel.summary() shows not only the entropy of words, but also the entropy of term-weighted words.

0.12.0 (2021-04-26)

- Now DMRModel and GDMRModel support multiple values of metadata (see https://github.com/bab2min/tomotopy/blob/main/examples/dmr_multi_label.py ).
- The performance of GDMRModel was improved.
- A copy() method has been added for all topic models to do a deep copy.
- An issue was fixed where words that are excluded from training (by min_cf, min_df) have an incorrect topic id. Now all excluded words have -1 as their topic id.
- Now all exceptions and warnings generated by tomotopy follow standard Python types.
- Compiler requirements have been raised to C++14.

0.11.1 (2021-03-28)

- A critical bug of asymmetric alphas was fixed. Due to this bug, version 0.11.0 has been removed from releases.

0.11.0 (2021-03-26) (removed)

- A new topic model PTModel for short texts was added into the package.
- An issue was fixed where LDAModel.infer() sometimes causes a segmentation fault.
- A mismatch of numpy API version was fixed.
- Now asymmetric document-topic priors are supported.
- Serializing topic models to bytes in memory is supported.
- An argument normalize was added to get_topic_dist(), get_topic_word_dist() and get_sub_topic_dist() for controlling normalization of results.
- Now DMRModel.lambdas and DMRModel.alpha give correct values.
- Categorical metadata support for GDMRModel was added (see https://github.com/bab2min/tomotopy/blob/main/examples/gdmr_both_categorical_and_numerical.py ).
- Python3.5 support was dropped.

0.10.2 (2021-02-16)

- An issue was fixed where LDAModel.train() fails with large K.
- An issue was fixed where Corpus loses its uid values.

0.10.1 (2021-02-14)

- An issue was fixed where Corpus.extract_ngrams() crashes with empty input.
- An issue was fixed where LDAModel.infer() raises an exception with valid input.
- An issue was fixed where LDAModel.infer() generates wrong Document.paths.
- Since a new parameter freeze_topics for LDAModel.train() was added, you can control whether to create a new topic or not when training.

0.10.0 (2020-12-19)

- The interfaces of Corpus and of LDAModel.docs were unified. Now you can access the documents in a corpus in the same manner.
- __getitem__ of Corpus was improved. Not only indexing by int, but also indexing by Iterable[int] and slicing are supported. Indexing by uid is also supported.
- New methods Corpus.extract_ngrams() and Corpus.concat_ngrams() were added. They extract n-gram collocations using PMI and concatenate them into single words.
- A new method LDAModel.add_corpus() was added, and LDAModel.infer() can receive a corpus as input.
- A new module tomotopy.coherence was added. It provides a way to calculate the coherence of the model.
- A parameter window_size was added to FoRelevance.
- An issue was fixed where NaN often occurs when training HDPModel.
- Now Python3.9 is supported.
- A dependency on py-cpuinfo was removed, and module initialization was improved.

0.9.1 (2020-08-08)

- Memory leaks of version 0.9.0 were fixed.
- LDAModel.summary() was fixed.

0.9.0 (2020-08-04)

- The LDAModel.summary() method, which prints a human-readable summary of the model, has been added.
- The random number generator of the package has been replaced with EigenRand. It speeds up random number generation and resolves result differences between platforms.
- Due to the above, even if seed is the same, the model training result may differ from versions before 0.9.0.
- Fixed a training error in HDPModel.
- DMRModel.alpha now shows the Dirichlet prior of the per-document topic distribution by metadata.
- DTModel.get_count_by_topics() has been modified to return a 2-dimensional ndarray.
- DTModel.alpha has been modified to return the same value as DTModel.get_alpha().
- Fixed an issue where the metadata value could not be obtained for documents of GDMRModel.
- HLDAModel.alpha now shows the Dirichlet prior of the per-document depth distribution.
- LDAModel.global_step has been added.
- LDAModel.get_count_by_topics() now returns the word count for both global and local topics.
- PAModel.alpha, PAModel.subalpha, and PAModel.get_count_by_super_topic() have been added.

0.8.2 (2020-07-14)

- New properties DTModel.num_timepoints and DTModel.num_docs_by_timepoint have been added.
- A bug that causes different results on different platforms even if seeds were the same was partially fixed. As a result of this fix, tomotopy in 32 bit now yields different training results from earlier versions.

0.8.1 (2020-06-08)

- A bug where LDAModel.used_vocabs returned an incorrect value was fixed.
- Now CTModel.prior_cov returns a covariance matrix with shape [k, k].
- Now CTModel.get_correlations() with empty arguments returns a correlation matrix with shape [k, k].

0.8.0 (2020-06-06)

- Since NumPy was introduced in tomotopy, many methods and properties of tomotopy now return numpy.ndarray, not just list.
- Tomotopy has a new dependency NumPy >= 1.10.0.
- A wrong estimation of LDAModel.infer() was fixed.
- A new method for converting HDPModel to LDAModel was added.
- New properties including LDAModel.used_vocabs, LDAModel.used_vocab_freq and LDAModel.used_vocab_df were added into topic models.
- A new g-DMR topic model (GDMRModel) was added.
- An error at initializing FoRelevance in macOS was fixed.
- An error that occurred when using a Corpus created without raw parameters was fixed.

0.7.1 (2020-05-08)

- Document.paths was added for HLDAModel.
- A memory corruption bug in PMIExtractor was fixed.
- A compile error in gcc 7 was fixed.

0.7.0 (2020-04-18)

- DTModel was added into the package.
- A bug in Corpus.save() was fixed.
- A new method Document.get_count_vector() was added into the Document class.
- Now Linux distributions use manylinux2010, and an additional optimization is applied.

0.6.2 (2020-03-28)

- A critical bug related to save and load was fixed. Versions 0.6.0 and 0.6.1 have been removed from releases.

0.6.1 (2020-03-22) (removed)

- A bug related to module loading was fixed.

0.6.0 (2020-03-22) (removed)

- The Corpus class, which manages multiple documents easily, was added.
- The LDAModel.set_word_prior() method, which controls the word-topic priors of topic models, was added.
- A new argument min_df, which filters words based on document frequency, was added to every topic model's __init__.
- tomotopy.label, the submodule about topic labeling, was added. Currently, only FoRelevance is provided.

0.5.2 (2020-03-01)

- A segmentation fault problem was fixed in LLDAModel.add_doc().
- A bug was fixed where infer of HDPModel sometimes crashes the program.
- A crash issue of LDAModel.infer() with ps=tomotopy.ParallelScheme.PARTITION, together=True was fixed.

0.5.1 (2020-01-11)

- A bug was fixed where SLDAModel.make_doc() doesn't support missing values for y.
- Now SLDAModel fully supports missing values for the response variables y. Documents with missing values (NaN) are included in topic modeling, but excluded from the regression of response variables.

0.5.0 (2019-12-30)

- Now PAModel.infer() returns both the topic distribution and the sub-topic distribution.
- New methods get_sub_topics and get_sub_topic_dist were added into Document (for PAModel).
- A new parameter parallel was added for the LDAModel.train() and LDAModel.infer() methods. You can select the parallelism algorithm by changing this parameter.
- ParallelScheme.PARTITION, a new algorithm, was added. It works efficiently when the number of workers is large, or when the number of topics or the size of the vocabulary is big.
- A bug where rm_top didn't work at min_cf < 2 was fixed.

0.4.2 (2019-11-30)

0.4.1 (2019-11-27)

- A bug in the __init__ function of PLDAModel was fixed.

0.4.0 (2019-11-18)

0.3.1 (2019-11-05)

- An issue where get_topic_dist() returns an incorrect value when min_cf or rm_top is set was fixed.
- The return value of get_topic_dist() for MGLDAModel documents was fixed to include local topics.
- The estimation speed with tw=ONE was improved.

0.3.0 (2019-10-06)

- A new model, LLDAModel, was added into the package.
- A crashing issue of HDPModel was fixed.
- Since hyperparameter estimation for HDPModel was implemented, the result of HDPModel may differ from previous versions. If you want to turn off hyperparameter estimation of HDPModel, set optim_interval to zero.

0.2.0 (2019-08-18)

- New models including CTModel and SLDAModel were added into the package.
- A new parameter option rm_top was added for all topic models.
- The problems in the save and load methods for PAModel and HPAModel were fixed.
- An occasional crash in loading HDPModel was fixed.
- The problem that ll_per_word was calculated incorrectly when min_cf > 0 was fixed.

0.1.6 (2019-08-09)

- Compile errors with clang in the macOS environment were fixed.

0.1.4 (2019-08-05)

- The issue when add_doc receives an empty list as input was fixed.
- The issue that PAModel.get_topic_words() doesn't extract the word distribution of subtopics was fixed.

0.1.3 (2019-05-19)

- The parameter min_cf and its stopword-removing function were added for all topic models.

0.1.0 (2019-05-12)

- First version of tomotopy

Sub-modules

- tomotopy.coherence: Added in version: 0.10.0 …
- tomotopy.label: Submodule tomotopy.label provides automatic topic labeling techniques. You can label topics automatically with simple code like below. The results …
- tomotopy.models: Submodule tomotopy.models provides various topic model classes. All models are based on LDAModel, which implements the basic …
- tomotopy.utils: Submodule tomotopy.utils provides various utilities for topic modeling. Corpus class helps manage multiple documents easily. The …
- tomotopy.viewer: Added in version: 0.13.0 …

Functions

def load_model(path: str) -> LDAModel

Source code::

    def load_model(path: str) -> 'LDAModel':
        '''
        ..versionadded:: 0.13.0

        Load any topic model from the given file path.

        Parameters
        ----------
        path : str
            The file path to load the model from.

        Returns
        -------
        model : LDAModel or its subclass
        '''
        model_types = _get_all_model_types()
        for model_type in model_types:
            try:
                return model_type.load(path)
            except:
                pass
        raise ValueError(f'Cannot load model from {path}')

Added in version: 0.13.0

Load any topic model from the given file path.

Parameters

path : str
    The file path to load the model from.

Returns

model : LDAModel or its subclass
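load_model() tries each model class's load in turn and returns the first one that succeeds. The same fallback pattern can be sketched generically. load_first and the two toy loaders are hypothetical. Unlike the library source shown above, the sketch catches Exception rather than using a bare except, so KeyboardInterrupt and SystemExit still propagate. It also chains the last error to make debugging easier.

```python
from typing import Callable, Iterable, List, TypeVar

T = TypeVar('T')


def load_first(loaders: Iterable[Callable[[str], T]], path: str) -> T:
    """Return the result of the first loader that succeeds on path (hypothetical helper)."""
    errors: List[Exception] = []
    for loader in loaders:
        try:
            return loader(path)
        except Exception as e:  # unlike a bare except, lets KeyboardInterrupt/SystemExit through
            errors.append(e)
    # chain the last failure so the root cause stays visible in the traceback
    raise ValueError(f'Cannot load model from {path}') from (errors[-1] if errors else None)


# toy loaders standing in for, e.g., LDAModel.load and HDPModel.load
def wrong_type_loader(path: str):
    raise RuntimeError('model type in file does not match')


def right_type_loader(path: str):
    return ('model', path)


model = load_first([wrong_type_loader, right_type_loader], 'sample.bin')
```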

def loads_model(data: bytes) -> LDAModel

Source code::

    def loads_model(data: bytes) -> 'LDAModel':
        '''
        ..versionadded:: 0.13.0

        Load any topic model from the given bytes data.

        Parameters
        ----------
        data : bytes
            The bytes data to load the model from.

        Returns
        -------
        model : LDAModel or its subclass
        '''
        model_types = _get_all_model_types()
        for model_type in model_types:
            try:
                return model_type.loads(data)
            except:
                pass
        raise ValueError(f'Cannot load model from the given data')

Added in version: 0.13.0

Load any topic model from the given bytes data.

Parameters

data : bytes
    The bytes data to load the model from.

Returns

model : LDAModel or its subclass

Classes

class ParallelScheme(*args, **kwds)

Source code::

    class ParallelScheme(IntEnum):
        """
        This enumeration is for Parallelizing Scheme:
        There are three options for parallelizing and the basic one is DEFAULT. Not all models support all options.
        """

        DEFAULT = 0
        """tomotopy chooses the best available parallelism scheme for your model"""

        NONE = 1
        """
        Turn off multi-threading for Gibbs sampling at training or inference.
        Operations other than Gibbs sampling may use multithreading.
        """

        COPY_MERGE = 2
        """
        Use Copy and Merge algorithm from AD-LDA. It consumes RAM in proportion to the number of workers.
        This has advantages when you have a small number of workers and a small number of topics and vocabulary sizes in the model.
        Prior to version 0.5, all models used this algorithm by default.

        > * Newman, D., Asuncion, A., Smyth, P., & Welling, M. (2009). Distributed algorithms for topic models. Journal of Machine Learning Research, 10(Aug), 1801-1828.
        """

        PARTITION = 3
        """
        Use Partitioning algorithm from PCGS. It consumes only twice as much RAM as a single-threaded algorithm, regardless of the number of workers.
        This has advantages when you have a large number of workers or a large number of topics and vocabulary sizes in the model.

        > * Yan, F., Xu, N., & Qi, Y. (2009). Parallel inference for latent dirichlet allocation on graphics processing units. In Advances in neural information processing systems (pp. 2134-2142).
        """

This enumeration is for the parallelizing scheme. There are three options for parallelizing, and the basic one is DEFAULT. Not all models support all options.

Ancestors

- enum.IntEnum

- builtins.int

- enum.ReprEnum

- enum.Enum

Class variables

var COPY_MERGE

Use Copy and Merge algorithm from AD-LDA. It consumes RAM in proportion to the number of workers. This has advantages when you have a small number of workers and a small number of topics and vocabulary sizes in the model. Prior to version 0.5, all models used this algorithm by default.

- Newman, D., Asuncion, A., Smyth, P., & Welling, M. (2009). Distributed algorithms for topic models. Journal of Machine Learning Research, 10(Aug), 1801-1828.

var DEFAULT

tomotopy chooses the best available parallelism scheme for your model

var NONE

Turn off multi-threading for Gibbs sampling at training or inference. Operations other than Gibbs sampling may use multithreading.

var PARTITION

Use Partitioning algorithm from PCGS. It consumes only twice as much RAM as a single-threaded algorithm, regardless of the number of workers. This has advantages when you have a large number of workers or a large number of topics and vocabulary sizes in the model.

- Yan, F., Xu, N., & Qi, Y. (2009). Parallel inference for latent dirichlet allocation on graphics processing units. In Advances in neural information processing systems (pp. 2134-2142).

class TermWeight(*args, **kwds)

Source code::

    class TermWeight(IntEnum):
        """
        This enumeration is for Term Weighting Scheme and it is based on the following paper:

        > * Wilson, A. T., & Chew, P. A. (2010, June). Term weighting schemes for latent dirichlet allocation. In human language technologies: The 2010 annual conference of the North American Chapter of the Association for Computational Linguistics (pp. 465-473). Association for Computational Linguistics.

        There are three options for term weighting and the basic one is ONE. The others can also be applied to all topic models in `tomotopy`.
        """

        ONE = 0
        """ Consider every term equal (default)"""

        IDF = 1
        """
        Use Inverse Document Frequency term weighting.

        Thus, a term occurring in almost every document has very low weighting
        and a term occurring in a few documents has high weighting.
        """

        PMI = 2
        """
        Use Pointwise Mutual Information term weighting.
        """

This enumeration is for the term weighting scheme and is based on the following paper:

- Wilson, A. T., & Chew, P. A. (2010, June). Term weighting schemes for latent dirichlet allocation. In human language technologies: The 2010 annual conference of the North American Chapter of the Association for Computational Linguistics (pp. 465-473). Association for Computational Linguistics.

There are three options for term weighting, and the basic one is ONE. The others can also be applied to all topic models in tomotopy.

Ancestors

- enum.IntEnum

- builtins.int

- enum.ReprEnum

- enum.Enum

Class variables

var IDF

Use Inverse Document Frequency term weighting. Thus, a term occurring in almost every document has very low weighting, and a term occurring in only a few documents has high weighting.

var ONE

Consider every term equal (default)

var PMI

Use Pointwise Mutual Information term weighting.
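The idea behind IDF weighting can be illustrated with the classic definition idf(w) = log(N / df(w)), where N is the number of documents and df(w) is the word's document frequency. This is a sketch of the concept only. The exact formula tomotopy applies for TermWeight.IDF is not given here and may differ (for example, in smoothing), and idf_weights is a hypothetical helper. A word found in every document gets weight 0, and rarer words get larger weights, as described for IDF above.

```python
import math
from collections import Counter
from typing import Dict, List


def idf_weights(docs: List[List[str]]) -> Dict[str, float]:
    """Classic IDF: log(number of documents / document frequency of the word)."""
    n_docs = len(docs)
    # count each word at most once per document
    df = Counter(w for doc in docs for w in set(doc))
    return {w: math.log(n_docs / count) for w, count in df.items()}


docs = [['the', 'church', 'choir'], ['the', 'match'], ['the', 'league', 'match']]
weights = idf_weights(docs)
```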